AWS re:Invent 2023 Notes

Posted on November 28, 2023 in Event Notes

AWS re:Invent 2023 is a learning conference hosted by AWS for the global cloud computing community. The in-person event features keynote announcements, training and certification opportunities, access to 2,000+ technical sessions, the Expo, after-hours events, and so much more.

Day 1 - 11/27/23

7:30PM Keynote address - Peter DeSantis - SVP, Utility Computing (AWS)

Peter DeSantis continues the Monday Night Live tradition of diving deep into the engineering that powers AWS services.

Announcements:

Announcing Amazon Aurora Limitless Database

New capability that scales beyond the write limits of a single Aurora database while maintaining the simplicity of operating a single database.

- This helps make it easy for developers to scale apps.

- Saves builders MONTHS compared to building custom solutions

Amazon ElastiCache Serverless

This will help customers create highly available caches in less time.

- Instantly scales vertically and horizontally to support even the most demanding applications

- No need to manage infrastructure

Amazon ElastiCache Serverless for Redis and Memcached

The new serverless option lets customers create a cache in under a minute and instantly scale capacity based on application traffic patterns. ElastiCache Serverless is compatible with two popular open-source caching solutions, Redis and Memcached.

Amazon Redshift Serverless with AI-driven scaling and optimizations

AI-driven scaling and optimizations in Amazon Redshift Serverless predict workloads and automatically scale and optimize resources to help customers meet price-performance targets.

Day 2 - 11/28/23

8:30AM Keynote address - Adam Selipsky - CEO at Amazon Web Services (AWS)

Adam Selipsky, CEO of Amazon Web Services, shared his perspective on cloud transformation. He highlighted innovations in data, infrastructure, artificial intelligence and machine learning that are helping AWS customers achieve their goals faster.

He discussed the investments being made at all three levels of the GenAI stack. He announced the launch of several new services that will help accelerate workloads and innovation in their customers' businesses.

First up... Amazon Q: GenAI-powered assistant that is tailored to your business with specific plans for businesses and builders.

- Available in the AWS Console, CodeWhisperer, and Slack channels

- AWS Architect skills

- Troubleshoot common errors from within the CloudWatch Console

- Help developers with Feature development process

- Code transformation - ease the pain of upgrading code and meeting security compliance standards

- For business analysts, Amazon Q is available to interact securely with all the data in your enterprise. It is also available in Amazon QuickSight, where you can use natural language to create and modify dashboards and create stories.

- Amazon Q in Connect personalizes its interactions to each individual user based on an organization's existing identities, roles, and permissions.

Amazon Bedrock: Lots of new capabilities in Bedrock.

- Guardrails: Get help with building apps in a responsible way (preview)

- Knowledge Bases: Securely connect foundation models (FMs) in Amazon Bedrock to your company data for Retrieval Augmented Generation (RAG) to work with your data (general availability)

- Agents for Bedrock: Automate prompt engineering and application orchestration (GA)

Infrastructure: We're deepening our collaboration with NVIDIA and unveiled two next-generation AWS-designed chip families (Graviton4 and Trainium2).

- We're offering the first cloud AI supercomputer with NVIDIA Grace Hopper Superchip and AWS UltraCluster scalability.

- Graviton4: This is the most powerful processor to date

- Trainium2: Uses less energy and powers the highest-performance compute on AWS for training FMs faster

- General availability of Amazon S3 Express One Zone

- Fastest data access speed in the cloud for the most latency-sensitive applications.

- This new storage class can handle objects of any size, but it is especially awesome for smaller objects.

- Allows customers to make millions of requests per minute.

Data: Your data is your differentiator, and customers need to break down data silos. We're continuing our investments in a zero-ETL future.

- Three new zero-ETL integrations to Amazon Redshift: Amazon Aurora PostgreSQL, RDS for MySQL, and Amazon DynamoDB. Data is automatically connected (available in preview today).

- Amazon DynamoDB zero-ETL integration with Amazon OpenSearch Service lets you perform many types of full-text search, vector search, and more on your DynamoDB data by automatically replicating and transforming it without custom code or infrastructure.

Day 3 - 11/29/23

8:30AM Keynote address - Swami Sivasubramanian, Vice President, Data and Machine Learning (AWS)

Generative AI is augmenting our productivity and creativity in new ways, while also being fueled by massive amounts of enterprise data and human intelligence. Swami explores how you can use your company data to build differentiated generative AI applications and accelerate productivity for employees across your organization. You will also hear from customer speakers with real-world examples of how they’ve used their data to support their generative AI use cases and create new experiences for their customers.

Announcements

Meta Llama 2 70B now available in Amazon Bedrock

You can now access Meta’s Llama 2 70B model in Amazon Bedrock. The Llama 2 70B model joins the already available Llama 2 13B model in Amazon Bedrock. Llama 2 models are next-generation large language models (LLMs) provided by Meta. Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models from leading AI companies, like Meta, along with a broad set of capabilities.

Amazon Titan

- Multimodal Embeddings - Amazon Titan Multimodal Embeddings helps customers power more accurate and contextually relevant multimodal search, recommendation, and personalization experiences for end users.

- Amazon Titan Text Lite & Text Express - Amazon Titan Text Express and Amazon Titan Text Lite are large language models (LLMs) that help customers improve productivity and efficiency for an extensive range of text-related tasks, and offer price and performance options that are optimized for your needs.

- Amazon Titan Image Generator - Amazon Titan Image Generator enables content creators with rapid ideation and iteration resulting in high efficiency image generation.

Custom model program for Anthropic Claude

Anthropic’s Claude 2.1 foundation model is now generally available in Amazon Bedrock. Claude 2.1 delivers key capabilities for enterprises, such as an industry-leading 200,000 token context window (2x the context of Claude 2.0), reduced rates of hallucination, improved accuracy over long documents, system prompts, and a beta tool use feature for function calling and workflow orchestration.

Amazon SageMaker HyperPod

Amazon SageMaker HyperPod reduces the time to train foundation models (FMs) by up to 40% by providing purpose-built infrastructure for distributed training at scale.

Amazon SageMaker Innovations

New capability to support foundation model (FM) evaluations. AWS customers can compare and select FMs based on metrics such as accuracy, robustness, bias, and toxicity, in minutes.

Vector engine for OpenSearch Serverless

Vector engine for OpenSearch Serverless is a simple, scalable, and high-performing vector database which makes it easier for developers to build machine learning (ML)–augmented search experiences and generative artificial intelligence (AI) applications without having to manage the underlying vector database infrastructure.

New vector search capabilities for

- Amazon DocumentDB and Amazon DynamoDB - Amazon DocumentDB (with MongoDB compatibility) now supports vector search, a new capability that enables you to store, index, and search millions of vectors with millisecond response times. Vectors are numerical representations of unstructured data, such as text, created by machine learning (ML) models.

- Amazon MemoryDB for Redis - A new capability that enables you to store, index, and search vectors. MemoryDB is a database that combines in-memory performance with multi-AZ durability. With vector search for MemoryDB, you can develop real-time machine learning (ML) and generative AI applications with the highest performance demands using the popular, open-source Redis API.
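To make the idea of vector search concrete, here is a minimal sketch in plain Python. It is not the DocumentDB or MemoryDB API; the `INDEX` contents, document IDs, and function names are made up for illustration, and the scan is brute force, whereas a managed service would typically rely on an index structure to stay fast at scale.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def top_k(query, index, k=2):
    """Return the IDs of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]

# Hypothetical 3-dimensional embeddings; real embeddings from an ML model
# usually have hundreds or thousands of dimensions.
INDEX = {
    "doc-cats": [0.9, 0.1, 0.0],
    "doc-dogs": [0.8, 0.2, 0.1],
    "doc-cloud": [0.0, 0.1, 0.95],
}

results = top_k([1.0, 0.0, 0.0], INDEX, k=2)
print(results)
```

The query vector points in roughly the same direction as the two pet documents, so those rank ahead of the unrelated one.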

Amazon Neptune Analytics

Neptune Analytics makes it faster for data scientists and application developers to get insights and find trends by analyzing graph data with tens of billions of connections in seconds. Neptune Analytics adds to existing Neptune tools and services such as Amazon Neptune Database, Amazon Neptune ML, and visualization tools. Neptune is a fast, reliable, and fully managed graph database.

Amazon OpenSearch Service zero-ETL Integration with Amazon S3

Amazon OpenSearch Service zero-ETL integration with Amazon S3, a new way for customers to query operational logs in Amazon S3 and S3-based data lakes without needing to switch between tools to analyze operational data, is available for customer preview. Customers can boost the performance of their queries and build fast-loading dashboards using the built-in query acceleration capabilities of Amazon OpenSearch Service zero-ETL integration with Amazon S3.

Amazon Q generative SQL in Amazon Redshift

Amazon Redshift introduces Amazon Q generative SQL in Amazon Redshift Query Editor, an out-of-the-box web-based SQL editor for Redshift, to simplify query authoring and increase your productivity by allowing you to express queries in natural language and receive SQL code recommendations. Furthermore, it allows you to get insights faster without extensive knowledge of your organization’s complex database metadata.

AWS Clean Rooms ML

AWS Clean Rooms ML (Preview) helps you and your partners apply privacy-enhancing ML to generate predictive insights without having to share raw data with each other. The capability's first model is specialized to help companies create lookalike segments. With AWS Clean Rooms ML lookalike modeling, you can train your own custom model using your data, and invite your partners to bring a small sample of their records to a collaboration to generate an expanded set of similar records.

Model Evaluation on Amazon Bedrock

Model Evaluation on Amazon Bedrock allows you to evaluate, compare, and select the best foundation models for your use case. Amazon Bedrock offers a choice of automatic evaluation and human evaluation. You can use automatic evaluation with predefined metrics such as accuracy, robustness, and toxicity. For subjective or custom metrics, such as friendliness, style, and alignment to brand voice, you can set up a human evaluation workflow with a few clicks.
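As a rough illustration of what an automatic accuracy comparison involves, the sketch below scores two models by exact-match accuracy and picks the higher scorer. This is not how Bedrock computes its metrics; the model names, outputs, and reference answers are all hypothetical, and exact match is only one simple way to define accuracy.

```python
def exact_match_accuracy(predictions, references):
    """Fraction of predictions equal to the reference (case/whitespace-insensitive)."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    matches = sum(
        p.strip().lower() == r.strip().lower()
        for p, r in zip(predictions, references)
    )
    return matches / len(references)

# Hypothetical evaluation set and model outputs, for illustration only.
REFERENCES = ["paris", "4", "blue"]
MODEL_OUTPUTS = {
    "model-a": ["Paris", "4", "green"],
    "model-b": ["Paris", "5", "green"],
}

scores = {
    name: exact_match_accuracy(outputs, REFERENCES)
    for name, outputs in MODEL_OUTPUTS.items()
}
best = max(scores, key=scores.get)  # model with the highest accuracy
print(scores, best)
```

Subjective criteria such as friendliness or brand voice do not reduce to a comparison like this, which is where the human evaluation workflow comes in.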

Day 4 - 11/30/23

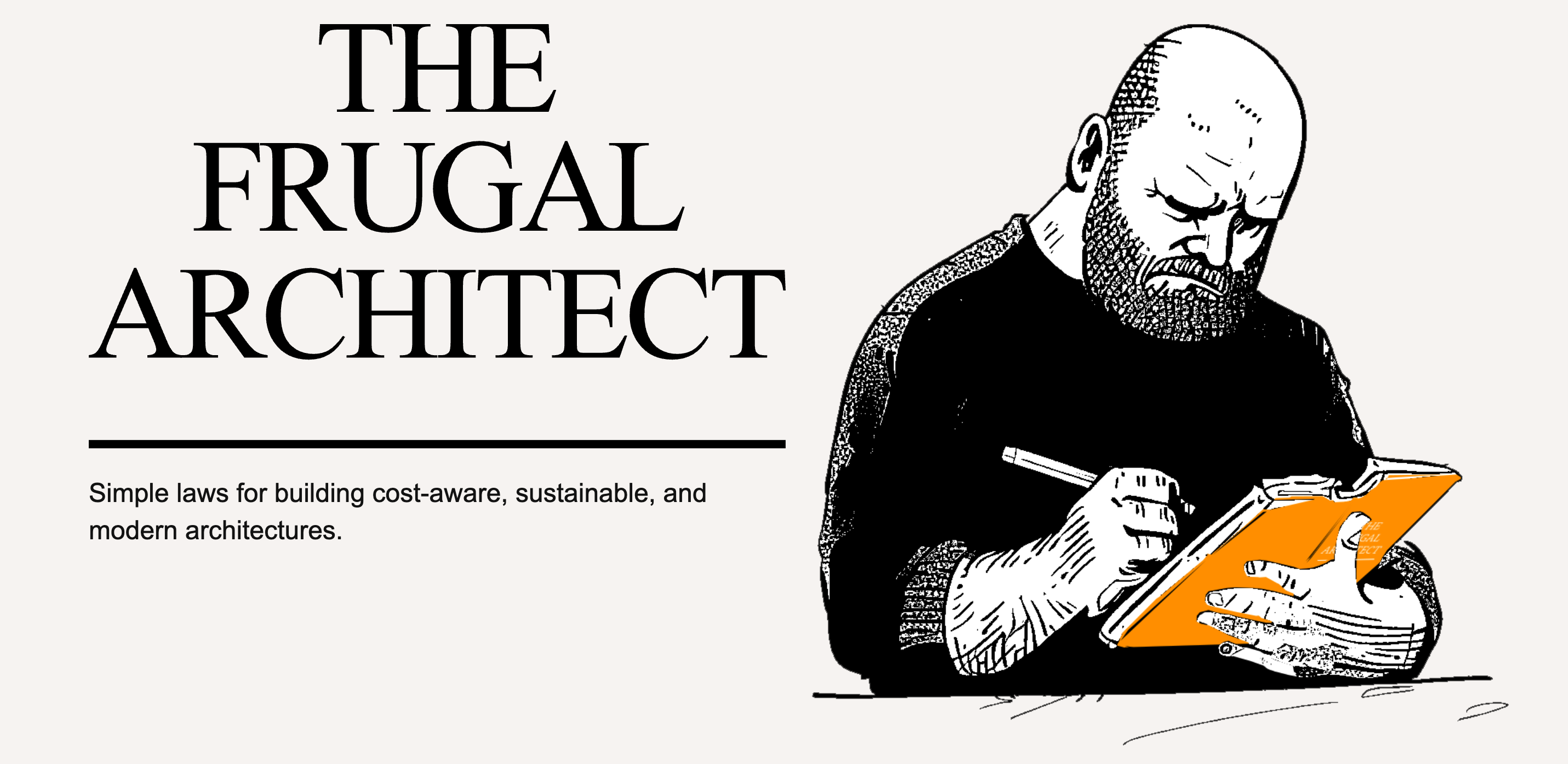

8:30AM CTO Keynote - Dr. Werner Vogels - VP and CTO, Amazon.com

Dr. Werner Vogels, Amazon.com's VP and CTO, joins us for his twelfth re:Invent appearance. In his keynote, he covers best practices for designing resilient and cost-aware architectures. He also discusses why artificial intelligence is something every builder must consider when developing systems and the impact this will have on our world.

Announcements

The Frugal Architect

- Architect with cost in mind

- Cost is a close proxy/approximation for sustainability

Design

I. Cost is a Non-Functional Requirement - Consider Cost at Every Step

II. Systems that Last Align Cost to Business - Align cost and revenue

III. Architecting is a Series of Trade-Offs - Align your priorities

Measure

IV. Unobserved Systems Lead to Unknown Costs - Define your meter

V. Cost Aware Architectures Implement Cost Controls - Establish your tiers

Optimize

VI. Cost Optimization is Incremental - Continuously optimize

VII. Unchallenged Success Leads to Assumptions - Disconfirm your beliefs
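One way to read "Align cost and revenue" and "Define your meter" is to track infrastructure cost per unit of business output over time. The sketch below is an interpretation under that assumption, not something shown in the keynote; the `MonthlySnapshot` fields, the sample figures, and the 10% tolerance are all invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class MonthlySnapshot:
    month: str
    infra_cost_usd: float  # total infrastructure spend for the month
    orders: int            # the business "meter" chosen for this example

def cost_per_order(snapshot):
    """Infrastructure cost attributed to a single order."""
    return snapshot.infra_cost_usd / snapshot.orders

def cost_tracks_business(snapshots, tolerance=0.10):
    """True if cost per order never rises more than `tolerance` above the first month."""
    baseline = cost_per_order(snapshots[0])
    return all(cost_per_order(s) <= baseline * (1 + tolerance) for s in snapshots)

# Hypothetical figures: cost scales with orders in Jan and Feb, then outpaces them in Mar.
SNAPSHOTS = [
    MonthlySnapshot("2023-01", 1000.0, 10_000),
    MonthlySnapshot("2023-02", 1500.0, 15_000),
    MonthlySnapshot("2023-03", 2400.0, 16_000),
]

for s in SNAPSHOTS:
    print(s.month, round(cost_per_order(s), 3))
print(cost_tracks_business(SNAPSHOTS))
```

A check like this only flags that unit cost drifted; finding out why is the job of the observability and cost controls named in principles IV and V.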

Constraints breed creativity

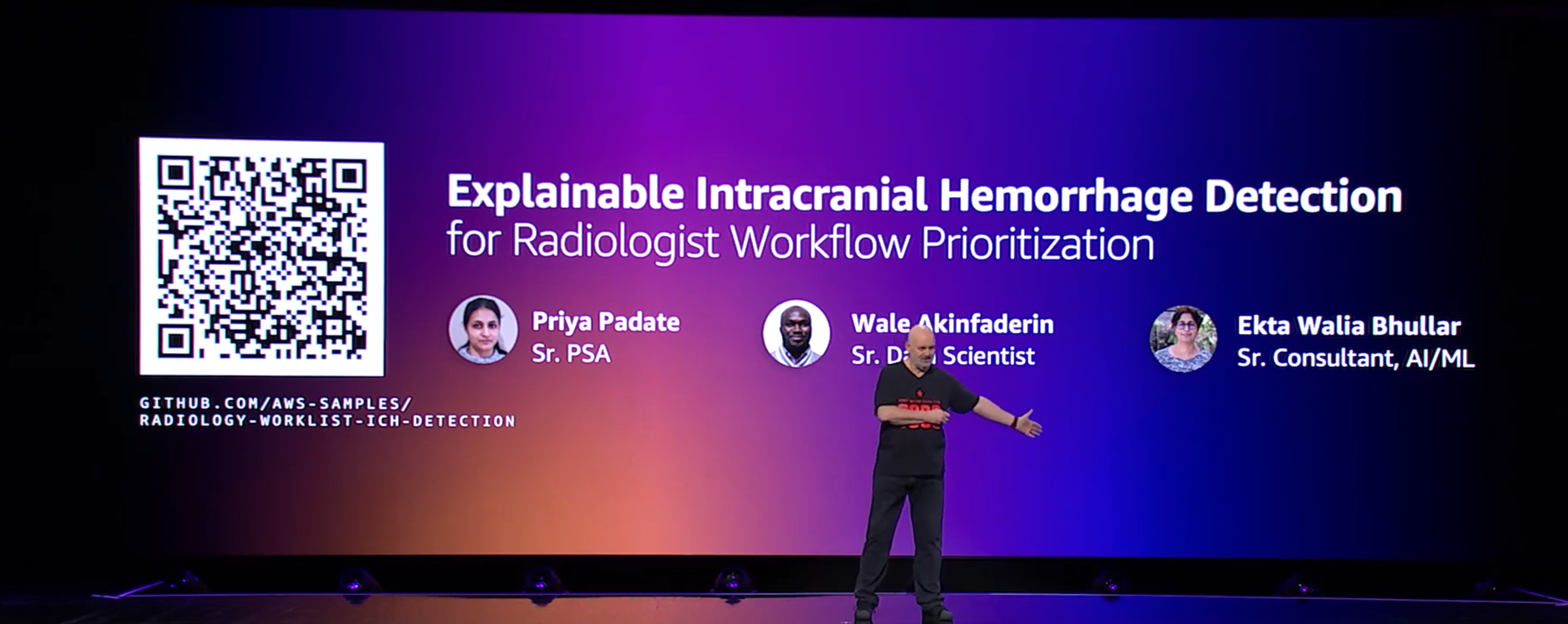

AI for Good

AI predicts. Professionals decide.

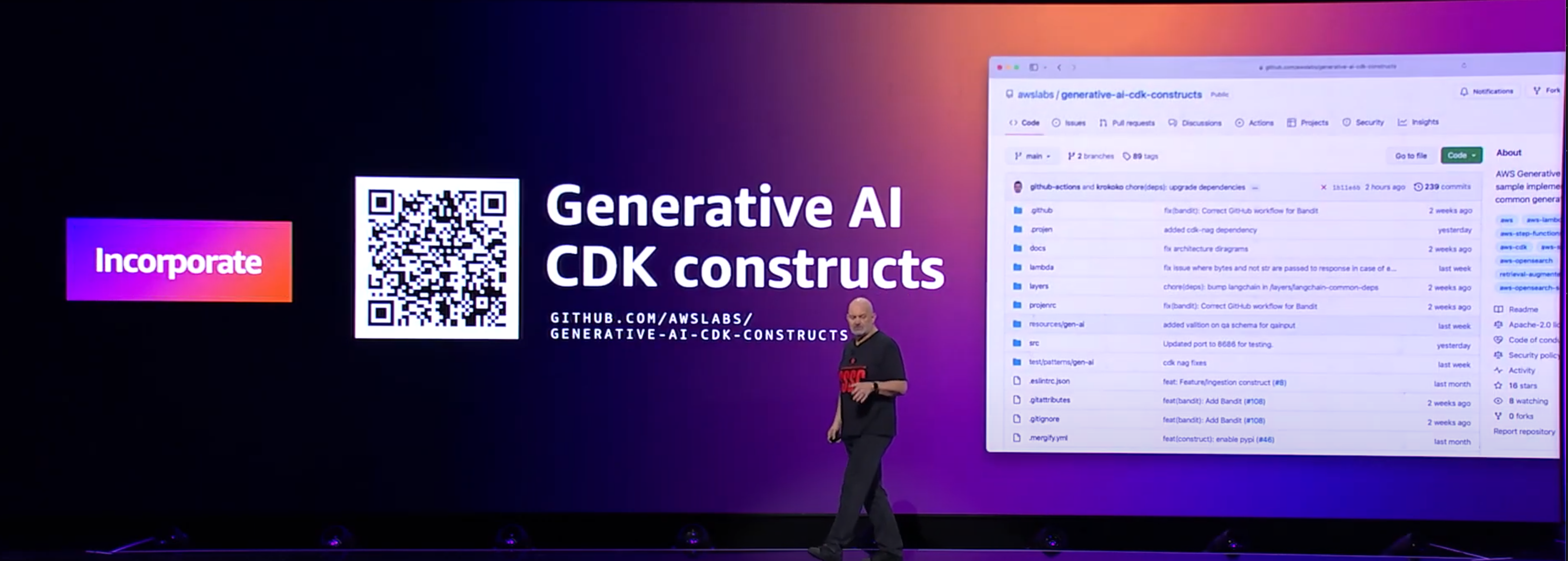

Building with Generative AI

Generative AI CDK constructs

Amazon Inspector - CI/CD Container Scanning

Amazon Inspector adds three new capabilities to increase the realm of possibilities when scanning your workloads for software vulnerabilities:

- Amazon Inspector introduces a new set of open source plugins and an API allowing you to assess your container images for software vulnerabilities at build time directly from your continuous integration and continuous delivery (CI/CD) pipelines wherever they are running.

- Amazon Inspector can now continuously monitor your Amazon Elastic Compute Cloud (Amazon EC2) instances without installing an agent or additional software (in preview).

- Amazon Inspector uses generative artificial intelligence (AI) and automated reasoning to provide assisted code remediation for your AWS Lambda functions.